Lumens.ai v1.3: Introducing the Lumens Visuals Co-Pilot for Music Fans, Artists, and Creators

When your favorite track drops… where are you?

- On a neon-lit rooftop in Tokyo?

- Inside a cosmic cathedral of bass?

- At the center of a holographic desert festival somewhere between Burning Man and another dimension?

For most of music history, those worlds lived only in imagination. Music could take you there emotionally, but the environments themselves remained invisible.

Today that changes.

With Lumens 1.3, we’re introducing the Lumens GenAI Co-Pilot, a powerful new creative surface inside the Lumens.ai app that allows fans, artists, and visuals creators to generate cinematic music worlds in seconds.

Using the world’s most advanced image and video AI models, the Lumens GenAI Co-Pilot transforms imagination into visual reality, helping fans express how they see and feel the music they love.

Fans want more than just the sound of music. They want multi-sensory experiences.

From Listener to Cinematic World Builder

The environments surrounding music — lighting, stage design, visuals, architecture — shape how music feels.

Think about a festival stage.

A nightclub.

A concert arena.

Your brain is wired for this. By some commonly cited estimates, nearly 80% of the information we process is visual, which is why visuals can dramatically amplify the emotional power of music.

The Lumens upgraded their AI superpowers around a simple idea:

What if fans could create animated musical environments themselves?

Share with their favorite dance music producers.

Remix memes and culture.

Inspire visual artists and VJs.

Everyone contributes to the creative process. With the Lumens, fans move beyond passive listening and become world builders — co-designing the visual environments where their favorite music lives.

Human-Directed, AI Co-Pilot Generated.

Generative AI is unlocking extraordinary creative capabilities, but the most powerful results still come from humans directing the work and connecting fans to culture.

The Lumens partner with human creators to expand creativity, not replace it.

Artists bring the vision.

Fans bring imagination.

AI accelerates exploration and asset creation.

(Lumens.ai style transfer: pixel art → IRL → LED Visuals)

The Lumens GenAI Co-Pilot is designed to support that creative collaboration, enabling fans to experiment, iterate, and shape visuals that amplify the enjoyment of dance music.

Built for Artists, VJs, and Live Show Production

While the GenAI Co-Pilot is accessible to fans, the Lumens also introduce powerful capabilities specifically designed for music artists, visuals creators and stage designers.

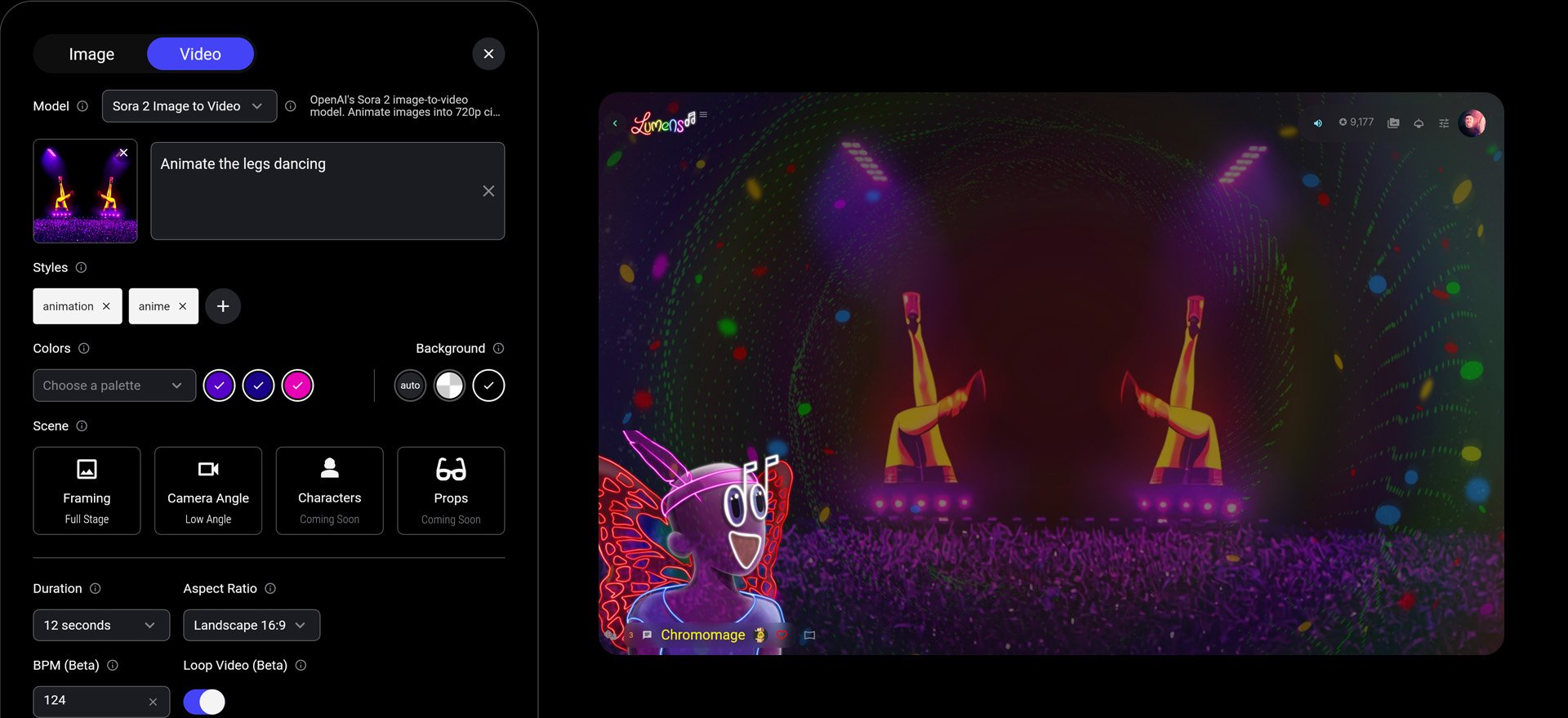

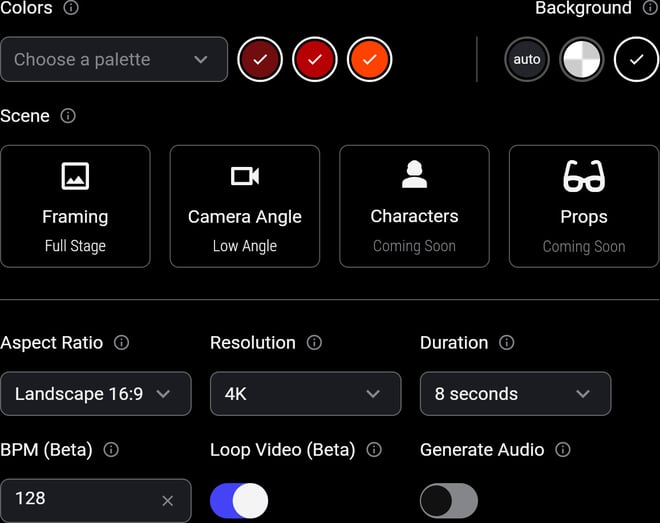

New generation tools allow creators to:

- generate looping videos with BPM-timed FX (in Beta)

- create visuals with clean black backgrounds for LED walls

- set color palettes to match artist branding

- remove backgrounds from user-uploaded footage or AI-generated visuals

- produce images with transparent backgrounds for compositing

These capabilities are especially useful for VJs working with software like Resolume, where clean visual layers and precise color control are critical.

This makes the Lumens a powerful tool for rapid show concept development, allowing artists and production teams to prototype visual ideas for tours, festivals, and immersive stage environments.
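To make the idea of a BPM-timed loop concrete, here is a minimal sketch of the underlying arithmetic: a loop that spans a whole number of beats lasts `beats * 60 / bpm` seconds. The function names and parameters below are illustrative assumptions, not part of the Lumens app or any VJ software.

```python
def loop_length(bpm: float, beats: int, fps: int) -> tuple[float, int]:
    """Return (duration in seconds, frame count) for a loop spanning `beats` beats.

    Hypothetical helper: shows the timing math only, not how Lumens renders loops.
    """
    seconds = beats * 60.0 / bpm      # one beat lasts 60 / bpm seconds
    frames = round(seconds * fps)     # nearest whole frame at the target frame rate
    return seconds, frames


def beat_frames(bpm: float, beats: int, fps: int) -> list[int]:
    """Frame index closest to the start of each beat, useful for placing FX hits."""
    return [round(i * 60.0 / bpm * fps) for i in range(beats)]


# A 16-beat (four-bar) loop at 128 BPM, rendered at 30 fps.
seconds, frames = loop_length(bpm=128, beats=16, fps=30)
print(seconds, frames)  # 7.5 225
```

A loop cut to a whole number of beats repeats without drifting against the track, which is why the frame count is derived from the tempo rather than chosen by hand.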

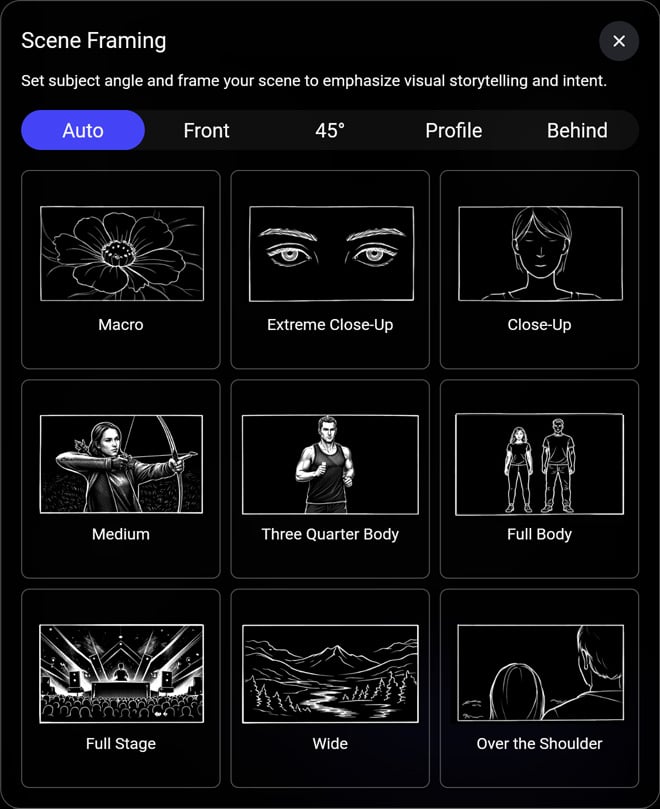

Cinematography Tools That Teach While You Create

One challenge with generative media tools is that prompts alone often produce unpredictable results.

The Lumens solve this by introducing guided cinematography controls that help users learn visual storytelling and shot framing while improving generation accuracy.

Instead of relying on vague prompts, users can specify how a shot should be composed — producing much more consistent visual outputs.

These tools help fans gradually upskill into visual storytellers and cinematographers, while also giving professionals precise creative control.
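As a rough illustration of why structured shot controls tend to be more consistent than free-form prompts, the sketch below turns a handful of named cinematography choices into one prompt string. Every field name and default here is a hypothetical example; the actual controls in the Lumens app may differ.

```python
from dataclasses import dataclass


@dataclass
class ShotSpec:
    """Hypothetical set of guided shot controls (not the Lumens schema)."""
    subject: str
    shot_size: str = "wide shot"
    camera_angle: str = "eye level"
    camera_move: str = "static"
    lighting: str = "neon rim light"

    def to_prompt(self) -> str:
        # Emit the fields in a fixed order so the same choices
        # always produce the same prompt text.
        parts = [
            self.subject,
            self.shot_size,
            f"{self.camera_angle} angle",
            f"{self.camera_move} camera",
            self.lighting,
        ]
        return ", ".join(parts)


shot = ShotSpec(subject="DJ on a desert stage", camera_angle="low", camera_move="slow dolly-in")
print(shot.to_prompt())
# DJ on a desert stage, wide shot, low angle, slow dolly-in camera, neon rim light
```

Because each control has a name and a default, a beginner can change one setting at a time and see what it does, which is the learning loop described above.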

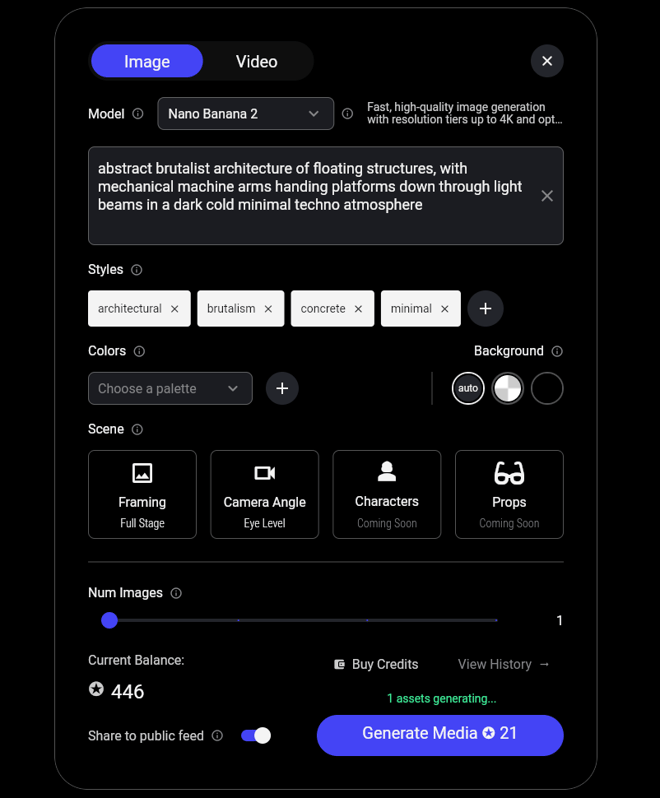

Built on the World’s Most Advanced Generative Models

The Lumens GenAI Co-Pilot launches with a curated set of the most powerful generative media models available today — giving creators access to both professional-grade visual quality and cost-efficient experimentation.

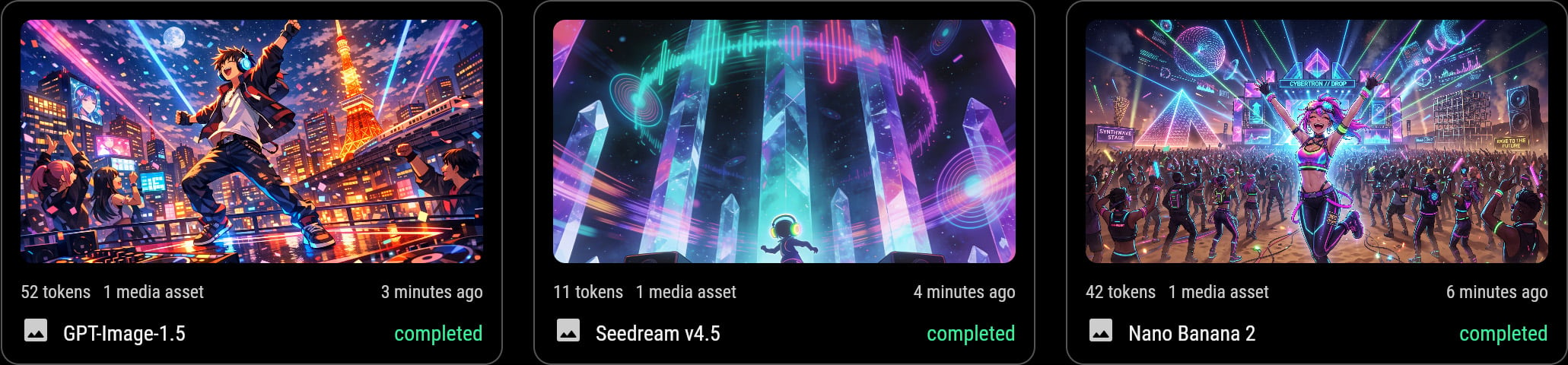

Image Generation Models

- Nano Banana 2 (Google)

- GPT-Image-1.5 (OpenAI)

- Seedream 4.5 (ByteDance)

- FLUX models for lower-cost exploration

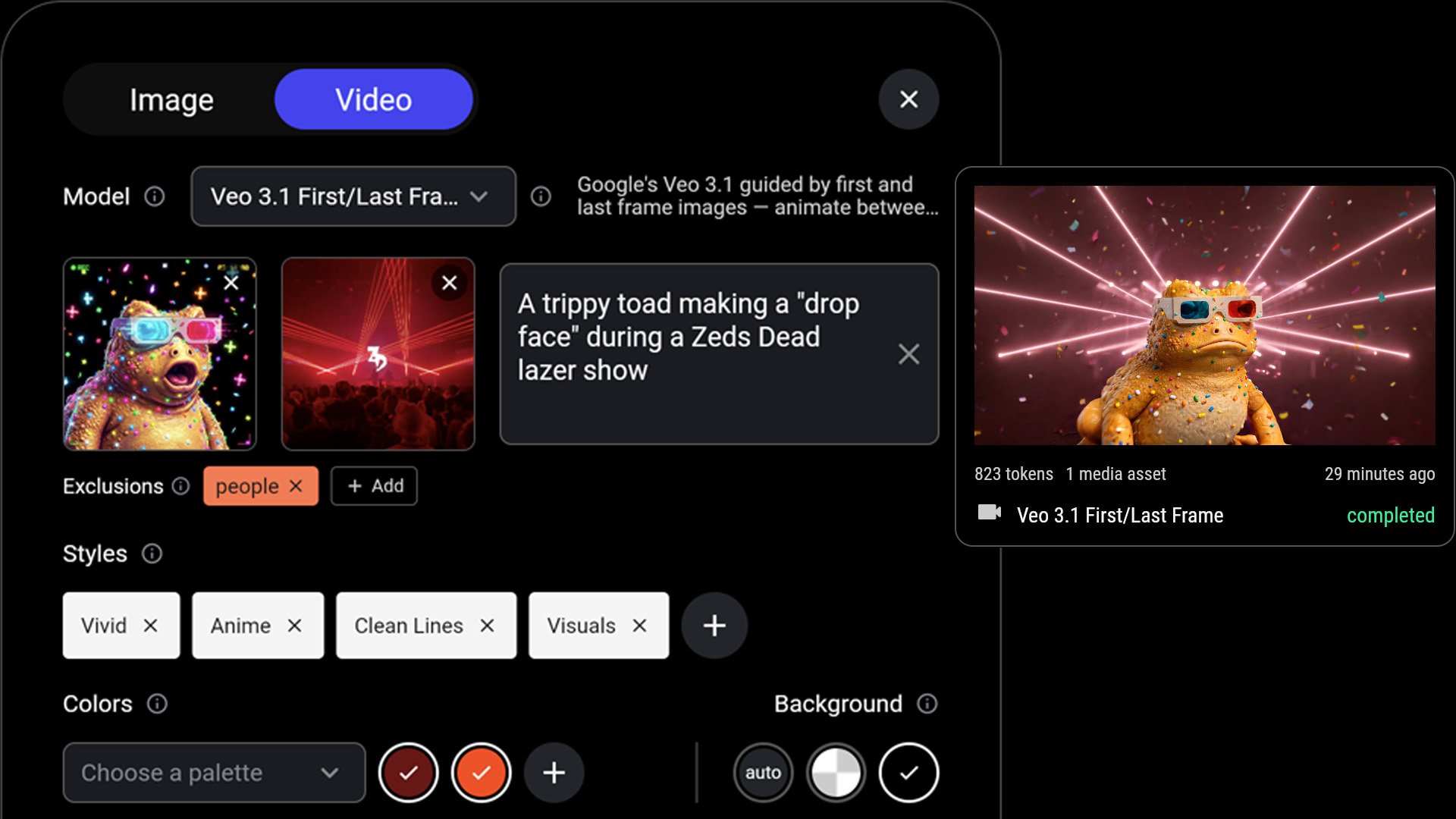

Video Generation Models

- Veo 3.1 (Google)

- Kling 03

- Sora 2 (OpenAI)

- Seedance 1.5 (ByteDance)

- WAN models for lightweight generation

- LTX 2.3

Instead of jumping between multiple AI platforms, the Lumens bring these capabilities directly into the music experience.

Explore how the Lumens combine the world’s most advanced image and video AI models inside a single creative interface. Try the Lumens App.

Unlocking Fan Co-Creation with GenAI Co-Pilots

The Console gives fans and creators the ability to generate cinematic visuals and environments directly in the context of the fan experience.

- Dream music spaces

- Cinematic stage environments

- Album-inspired visual worlds

- Touring venue pre-visualization

All generated in seconds using simple prompts and intuitive camera controls.

Whether you’re imagining a serene ambient sanctuary or a chaotic cyberpunk rave universe, the Lumens GenAI Co-Pilot transforms those ideas into visuals you can refine, remix, and share.

Designing Your Dream Music Space

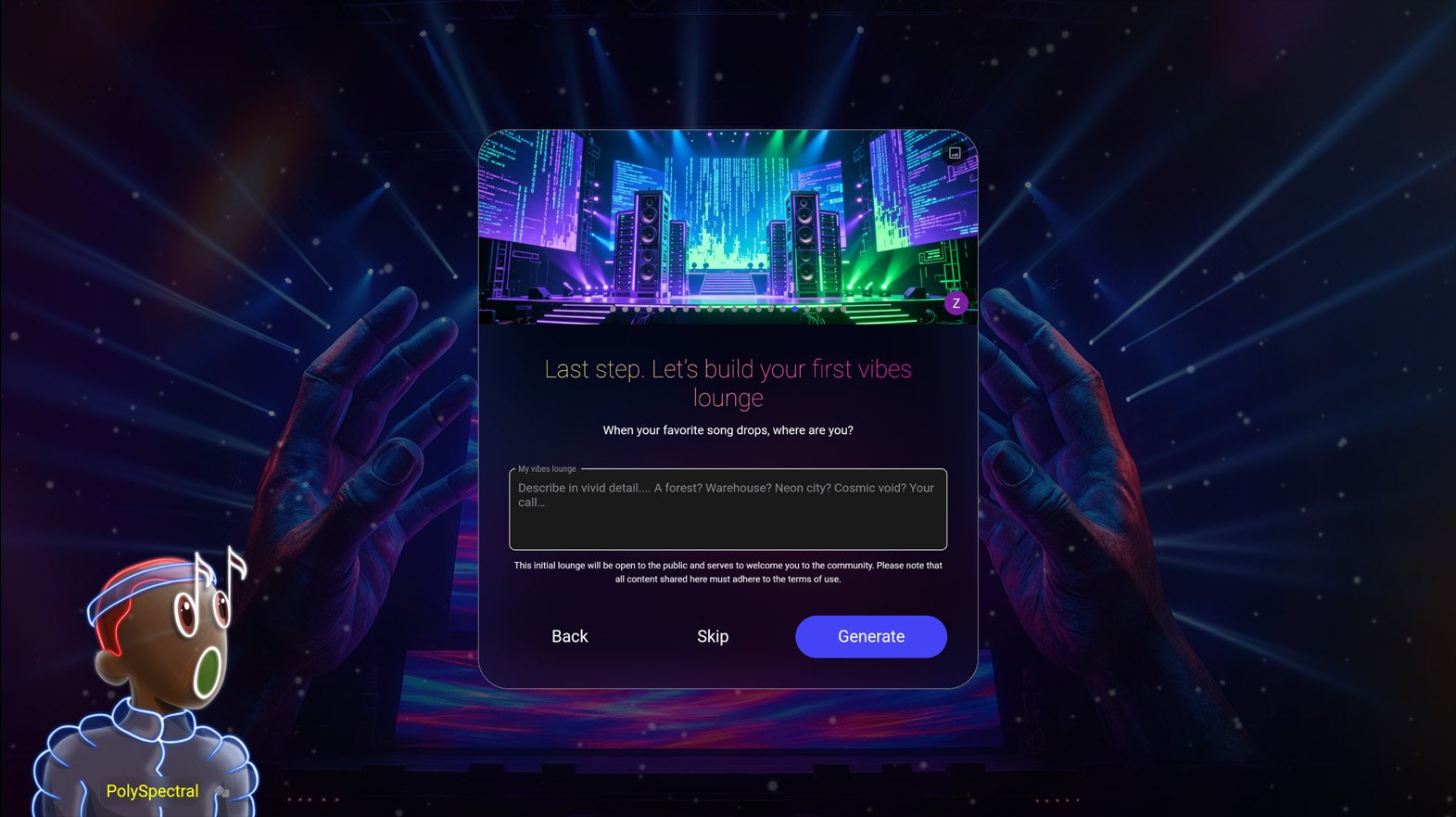

When new fans join the Lumens, the app now introduces a GenAI-powered onboarding experience that helps them create their personal environment to vibe out to their music.

After identifying a user’s favorite artists, the Lumens ask a simple question:

“What does your dream music space look like?”

Maybe it’s:

- a glowing cyberpunk skyline

- a floating ambient temple

- a neon desert rave

- a futuristic listening lounge

The AI then generates visual concepts and configures the user’s My Space, populated with:

- their favorite artists

- upcoming local shows

- personalized visual environments

Instead of opening an app, fans step directly into a music world designed around them. And artists get to see how fans choose to design the spaces where they vibe out to their music.

What's Next?

The GenAI Co-Pilot is an early foundation for something much larger.

Over time, these tools will evolve into the Lumens Creator Studio — a full environment for designing immersive music experiences.

Future developments will include:

- expanded generative model support

- deeper lighting and spatial integrations

- more advanced visual editing workflows

- new creator monetization capabilities

Behind the scenes, Lumens already supports:

- AI credits purchases and credit-based generation

- queued rendering jobs

- scalable infrastructure for new models

This foundation allows the Lumens to rapidly integrate new breakthroughs in generative media as they emerge.

The Future of Music Is Experiential

Music has always been the soundtrack to worlds — clubs, festivals, bedrooms, and cities.

Now fans can design those worlds themselves.

Whether you’re a music fan transforming your living room into your dream vibes lounge, a VJ prototyping visuals for a tour, or an artist imagining the stage environment for your next tour, the tools are now within reach.

And this is only the beginning of what the Lumens will make possible.